AI Readiness Assessment Framework

How to evaluate whether an organization is ready for AI adoption across infrastructure, data, skills, culture, and process dimensions.

The question “Are we ready for AI?” is deceptively simple. The answer is never a binary yes or no. It’s a nuanced evaluation across multiple dimensions, each with its own maturity curve and investment requirements.

I built this assessment framework from research on AI adoption patterns and from practical application. Here I want to share the approach for evaluating organizational AI readiness, so that teams can understand where they stand and what it takes to move forward.

What Does “AI Ready” Actually Mean?

Let me start by dismantling a common misconception: being “AI ready” doesn’t mean having cutting-edge infrastructure or a team of PhD researchers. It means having the foundational capabilities to successfully deploy, operate, and derive value from AI systems appropriate to your use cases.

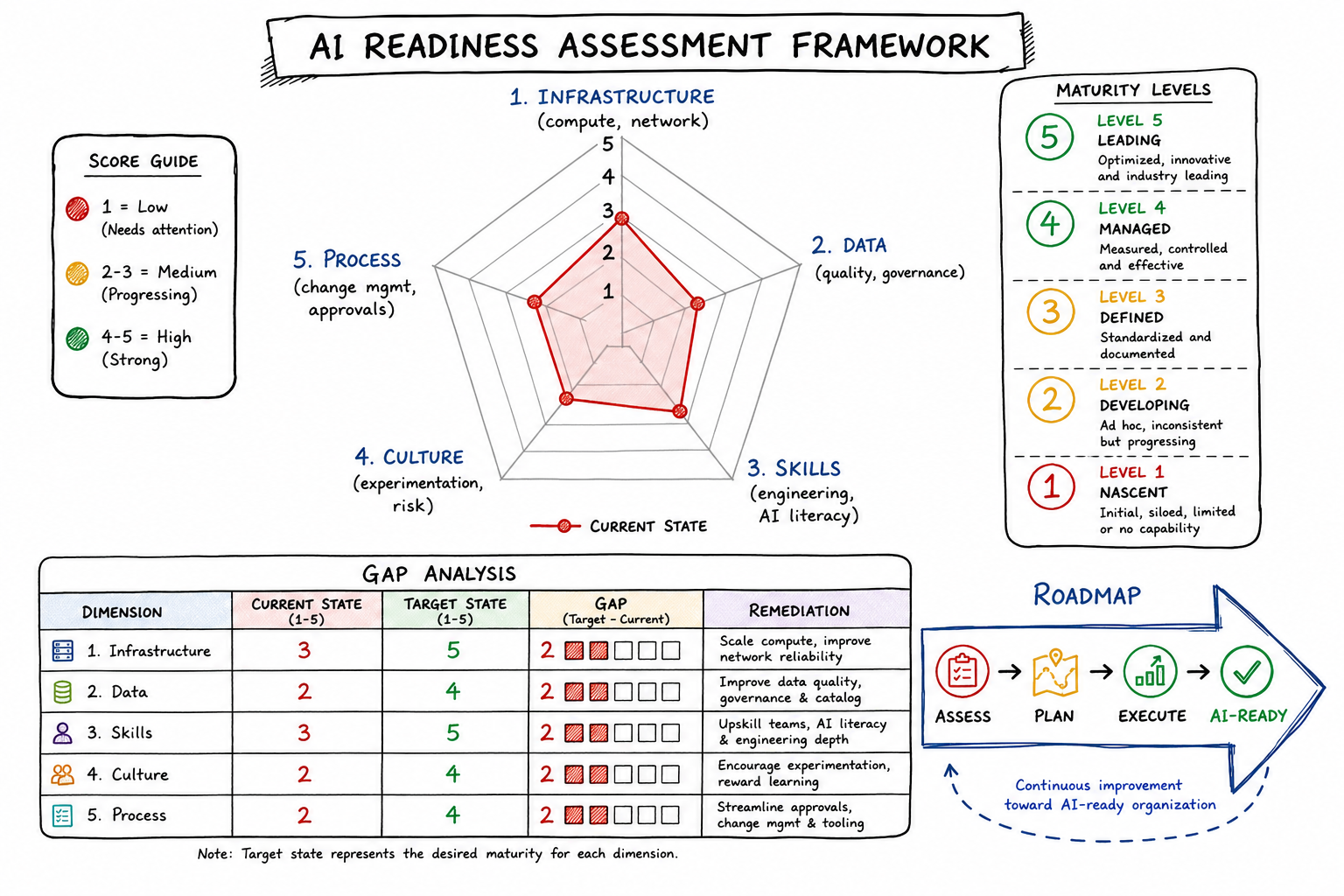

I define AI readiness across five interdependent dimensions:

- Infrastructure Readiness – The technical foundation

- Data Readiness – The fuel for AI systems

- Skills Readiness – The human capabilities

- Culture Readiness – The organizational mindset

- Process Readiness – The operational frameworks

An organization might score highly in one dimension while lagging in others. The gaps matter because AI initiatives fail at the weakest link. A company with world-class data infrastructure but rigid approval processes will struggle just as much as one with an agile culture but poor data quality.
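
The weakest-link idea can be sketched in a few lines of Python. This is only an illustration: the scores below are made-up values on the 1-5 rubric scale, and `weakest_dimensions` is a hypothetical helper name, not part of any tool.

```python
# Hypothetical example scores on the 1-5 rubric scale (not real assessment data).
scores = {
    "infrastructure": 4,
    "data": 2,
    "skills": 3,
    "culture": 3,
    "process": 2,
}


def weakest_dimensions(scores: dict) -> list:
    """Return every dimension tied for the lowest score."""
    lowest = min(scores.values())
    return [name for name, score in scores.items() if score == lowest]


print(weakest_dimensions(scores))  # ['data', 'process']
```

Returning all tied dimensions rather than a single minimum keeps the result honest when two gaps are equally severe.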

Infrastructure Readiness: Compute, Networking, Security

Infrastructure readiness evaluates whether your technical environment can support AI workloads. This isn’t just about raw compute power—it’s about the entire stack.

Key Assessment Areas

Compute Capabilities

- Do you have access to GPU/TPU resources for training and inference?

- Can you scale compute elastically based on workload demands?

- Is there a clear path from experimentation (notebooks) to production deployment?

Networking and Latency

- Can your network handle the data movement AI workloads require?

- For real-time inference, do you have the latency characteristics needed?

- Is there connectivity between data sources and compute resources?

Security and Compliance

- Do your security policies accommodate AI-specific risks (model theft, adversarial attacks)?

- Can you implement model access controls and audit trails?

- Are there clear boundaries for where sensitive data can be processed?

Infrastructure Readiness Rubric

| Level | Score | Characteristics |

|---|---|---|

| Nascent | 1 | No dedicated AI infrastructure. All workloads run on general-purpose systems. No GPU access. |

| Emerging | 2 | Ad-hoc GPU access (cloud or limited on-prem). Manual provisioning. Basic security policies. |

| Developing | 3 | Defined AI compute environment. Self-service provisioning for approved workloads. Security policies adapted for ML. |

| Mature | 4 | MLOps platform in place. Automated scaling. Integrated security and compliance monitoring. |

| Leading | 5 | Full AI platform with experiment tracking, model registry, automated deployment pipelines, and continuous monitoring. |

Data Readiness: Quality, Accessibility, Governance

I’ve seen more AI initiatives fail due to data issues than any other factor. Data readiness is often the longest pole in the tent.

Key Assessment Areas

Data Quality

- What percentage of critical data fields have documented quality metrics?

- Are there automated data validation pipelines?

- How quickly are data quality issues detected and resolved?
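
As a rough illustration of what an automated validation check might look like, the sketch below measures field completeness against documented thresholds. The field names, records, and thresholds are all assumptions made up for this example; a real pipeline would likely check much more (ranges, formats, freshness).

```python
def completeness(records: list, field: str) -> float:
    """Share of records in which `field` is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for record in records if record.get(field) not in (None, ""))
    return filled / len(records)


def failing_fields(records: list, thresholds: dict) -> dict:
    """Map each field below its documented threshold to its completeness."""
    results = {}
    for field, minimum in thresholds.items():
        ratio = completeness(records, field)
        if ratio < minimum:
            results[field] = round(ratio, 2)
    return results


# Hypothetical sample data and thresholds, for illustration only.
records = [
    {"customer_id": "c1", "email": "a@example.com"},
    {"customer_id": "c2", "email": ""},
    {"customer_id": "c3", "email": None},
    {"customer_id": "c4", "email": "d@example.com"},
]
thresholds = {"customer_id": 1.0, "email": 0.9}

print(failing_fields(records, thresholds))  # {'email': 0.5}
```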

Data Accessibility

- Can data scientists access the data they need without multi-week procurement processes?

- Is data cataloged and discoverable?

- Are there self-service mechanisms for data exploration?

Data Governance

- Is there clarity on data ownership and stewardship?

- Are there policies for data retention, anonymization, and consent management?

- Can you trace data lineage from source to model prediction?

Data Readiness Rubric

| Level | Score | Characteristics |

|---|---|---|

| Nascent | 1 | Data siloed across systems. No catalog. Quality unknown. Access requires IT tickets and weeks of waiting. |

| Emerging | 2 | Some data consolidated. Basic documentation exists. Quality measured reactively. Access improving but inconsistent. |

| Developing | 3 | Data warehouse/lake in place. Catalog covers major datasets. Quality dashboards exist. Self-service for common needs. |

| Mature | 4 | Integrated data platform. Automated quality monitoring. Strong governance framework. Feature stores for ML. |

| Leading | 5 | Real-time data availability. Proactive quality management. Full lineage tracking. Data products mindset with clear SLAs. |

Skills Readiness: Engineering Capabilities, AI Literacy

Technology is only as good as the people who build and use it. Skills readiness spans both technical depth and organizational breadth.

Key Assessment Areas

Technical AI/ML Skills

- Do you have data scientists and ML engineers on staff?

- Can your team build, train, and deploy models end-to-end?

- Is there expertise in MLOps and production ML systems?

Engineering Foundation

- How mature are your software engineering practices?

- Do teams use version control, CI/CD, and automated testing?

- Is there experience with distributed systems and cloud-native architectures?

Organizational AI Literacy

- Do business stakeholders understand what AI can and cannot do?

- Can product managers effectively specify AI requirements?

- Is there awareness of AI ethics and responsible use considerations?

Skills Readiness Rubric

| Level | Score | Characteristics |

|---|---|---|

| Nascent | 1 | No AI/ML staff. Limited software engineering maturity. AI seen as magic or hype by business. |

| Emerging | 2 | A few data scientists, often isolated. Engineering practices inconsistent. Some AI curiosity but unrealistic expectations. |

| Developing | 3 | Dedicated AI/ML team. Solid engineering practices. Business understands basic AI concepts and limitations. |

| Mature | 4 | Embedded AI capabilities across teams. MLOps expertise. Business can articulate AI use cases with realistic scope. |

| Leading | 5 | AI skills integrated into career paths. Continuous learning culture. Business leaders drive AI strategy with technical fluency. |

Culture Readiness: Experimentation Mindset, Risk Tolerance

Culture is the invisible force that determines whether AI initiatives thrive or die. I assess culture through observable behaviors, not stated values.

Key Assessment Areas

Experimentation Mindset

- How does the organization respond to failed experiments?

- Are teams encouraged to test hypotheses before building full solutions?

- Is there tolerance for uncertainty in project outcomes?

Risk Tolerance

- How does leadership respond to AI-specific risks (bias, errors, unexplainability)?

- Are there frameworks for making decisions under uncertainty?

- Is there willingness to deploy imperfect solutions and iterate?

Cross-Functional Collaboration

- Do technical and business teams work together effectively?

- Is there shared accountability for AI outcomes?

- Can the organization break down silos when needed?

Culture Readiness Rubric

| Level | Score | Characteristics |

|---|---|---|

| Nascent | 1 | Failure punished. Waterfall dominance. Deep silos. AI seen as IT’s problem. Risk aversion extreme. |

| Emerging | 2 | Lip service to experimentation. Some agile adoption. Silos remain. AI interest but unclear ownership. |

| Developing | 3 | Safe-to-fail experiments accepted. Agile common. Cross-functional teams forming. AI has executive sponsorship. |

| Mature | 4 | Learning from failure celebrated. Rapid iteration normal. Strong cross-functional collaboration. AI integrated into strategy. |

| Leading | 5 | Experimentation embedded in DNA. Distributed decision-making. AI ethics actively debated. Organization adapts quickly to AI learnings. |

Process Readiness: Change Management, Approval Workflows

Even with perfect technology, data, skills, and culture, AI initiatives can stall in bureaucratic quicksand. Process readiness evaluates the operational machinery.

Key Assessment Areas

Change Management

- Is there a defined process for introducing AI-powered changes to workflows?

- How are affected employees prepared for AI-augmented work?

- Are there mechanisms to gather and incorporate user feedback?

Approval and Governance Workflows

- How long does it take to get an AI model approved for production?

- Are approval criteria clear and consistently applied?

- Is there a fast-track for low-risk applications?

Vendor and Procurement

- Can the organization evaluate and onboard AI vendors efficiently?

- Are contracts structured to allow experimentation?

- Is there flexibility in procurement for rapidly evolving AI tools?

Process Readiness Rubric

| Level | Score | Characteristics |

|---|---|---|

| Nascent | 1 | No AI-specific processes. Generic IT change management (if any). Procurement takes months. |

| Emerging | 2 | Ad-hoc AI processes. Change management inconsistent. Procurement improving but slow. |

| Developing | 3 | Defined AI governance framework. Change management includes AI considerations. Streamlined procurement for known vendors. |

| Mature | 4 | Risk-tiered approval processes. Integrated change management with training. Flexible procurement with pre-approved AI tools. |

| Leading | 5 | Continuous deployment for low-risk AI. Change management is proactive, not reactive. Procurement enables rapid experimentation. |

Assessment Methodology and Scoring

When I conduct an AI readiness assessment, I follow a structured methodology:

Phase 1: Stakeholder Interviews (1-2 weeks)

I interview 15-25 stakeholders across technical, business, and leadership roles. The goal is to understand both the formal state and the lived experience.

Sample questions:

- “Walk me through the last AI project you were involved in. What went well? What didn’t?”

- “If you needed access to customer data for an AI experiment tomorrow, what would you have to do?”

- “Tell me about a time a project failed. How did leadership respond?”

Phase 2: Technical Assessment (1-2 weeks)

I work with technical teams to evaluate actual capabilities, not just stated ones.

Activities include:

- Architecture review of data and compute infrastructure

- Code and process review of existing ML projects

- Tooling inventory and integration assessment

Phase 3: Scoring and Gap Analysis (1 week)

I score each dimension using the rubrics above and identify the most critical gaps.

Overall Readiness Score Calculation:

Overall Score = (Infrastructure × 0.15) + (Data × 0.25) + (Skills × 0.25) + (Culture × 0.20) + (Process × 0.15)

The weights reflect my experience that data and skills are typically the highest-impact dimensions, but these can be adjusted based on organizational context.
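
The weighted formula above can be sketched in Python as follows. The weights come from the formula; the function name, the validation checks, and the example scores are assumptions added for illustration.

```python
# Weights taken from the formula above; adjust them for organizational context.
WEIGHTS = {
    "infrastructure": 0.15,
    "data": 0.25,
    "skills": 0.25,
    "culture": 0.20,
    "process": 0.15,
}


def overall_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted average of the five dimension scores (each on the 1-5 scale)."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same dimensions")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    for name, score in scores.items():
        if not 1 <= score <= 5:
            raise ValueError(f"{name} score {score} is outside the 1-5 scale")
    return sum(scores[name] * weights[name] for name in weights)


# Hypothetical dimension scores, for illustration only.
example = {"infrastructure": 3, "data": 2, "skills": 3, "culture": 2, "process": 2}
print(round(overall_score(example), 2))  # 2.4
```

Checking that the weights sum to 1.0 matters when they are adjusted: otherwise the result drifts off the 1-5 scale and the interpretation bands no longer apply.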

Interpreting the Overall Score

| Score Range | Interpretation |

|---|---|

| 1.0 - 1.9 | Not Ready: Significant foundational work needed before AI initiatives |

| 2.0 - 2.9 | Early Stage: Can pursue limited, low-complexity AI use cases with external support |

| 3.0 - 3.9 | Developing: Ready for broader AI adoption with targeted investments |

| 4.0 - 4.4 | Mature: Well-positioned for advanced AI initiatives |

| 4.5 - 5.0 | Leading: Capable of cutting-edge AI that can serve as a competitive differentiator |
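
A small lookup can turn a score into its interpretation band. The table lists ranges to one decimal place, while a weighted score can land between them (for example 2.95). This sketch assumes each band runs from its lower bound up to the next band's lower bound, and that a score of exactly 4.5 counts as Leading. Both are assumptions, not rules stated in the table.

```python
# (lower bound, label) pairs, highest band first.
BANDS = [
    (4.5, "Leading"),
    (4.0, "Mature"),
    (3.0, "Developing"),
    (2.0, "Early Stage"),
    (1.0, "Not Ready"),
]


def interpret(score: float) -> str:
    """Return the readiness band for an overall score between 1.0 and 5.0."""
    if not 1.0 <= score <= 5.0:
        raise ValueError(f"score {score} is outside the 1.0-5.0 range")
    for lower_bound, label in BANDS:
        if score >= lower_bound:
            return label
    raise AssertionError("unreachable: every valid score is >= 1.0")


print(interpret(2.4))  # Early Stage
print(interpret(4.5))  # Leading
```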

Common Gaps and How to Address Them

After many assessments, I’ve seen patterns in where organizations struggle:

Gap 1: Data Quality Debt

Symptom: High data readiness ambition but low actual quality scores.

Root Cause: Years of underinvestment in data management, which was treated as a cost center.

Address By: Start with a data quality initiative tied to specific AI use cases. Don’t try to boil the ocean; improve quality for the data that matters most to your priority initiatives.

Gap 2: The Skills Chasm

Symptom: Strong engineering but weak ML/AI capabilities.

Root Cause: Difficulty recruiting AI talent; existing engineers haven’t been upskilled.

Address By: Take a hybrid approach: hire a small core AI team while building a robust upskilling program. Partner with external firms on initial projects so that internal capability grows through collaboration.

Gap 3: Culture-Process Mismatch

Symptom: Leadership talks about experimentation but approval processes take months.

Root Cause: Risk and compliance functions not aligned with AI ambitions.

Address By: Engage risk and compliance early. Develop risk-tiered frameworks that allow fast-track for low-risk AI applications while maintaining appropriate governance for high-risk ones.

Gap 4: Infrastructure Fragmentation

Symptom: The data science team has its tools, engineering has different tools, and nothing integrates.

Root Cause: Teams solved immediate needs without architectural coordination.

Address By: Invest in a unified MLOps platform that bridges experimentation and production. This doesn’t mean one tool; it means integrated tools with clear handoffs.

Gap 5: Literacy Gaps

Symptom: Business asks for AI solutions to problems that don’t need AI, or expects AI to solve impossible problems.

Root Cause: AI hype without education.

Address By: Structured AI literacy programs for business stakeholders. Include both “what AI can do” and, equally important, “what AI cannot do.”

Prioritizing Readiness Investments

With limited resources, how do you decide where to invest? I use a prioritization framework based on two factors:

- Gap Severity: How far below target is each dimension?

- Dependency Impact: How much does this dimension block progress in others?

Prioritization Matrix

| | Low Dependency Impact | High Dependency Impact |

|---|---|---|

| Large Gap | Important but can sequence later | Critical priority |

| Small Gap | Monitor and maintain | Address opportunistically |
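
The matrix can be read as a simple two-factor classification. In the sketch below, the 1.0-point cutoff for a "large" gap and the yes/no dependency flag are assumptions chosen for illustration; in practice both are judgment calls made during the assessment.

```python
from dataclasses import dataclass


@dataclass
class Dimension:
    name: str
    current: float               # current rubric score (1-5)
    target: float                # target rubric score (1-5)
    high_dependency_impact: bool  # does this dimension block progress in others?


def quadrant(dim: Dimension, large_gap_threshold: float = 1.0) -> str:
    """Place a dimension in one of the four prioritization quadrants."""
    gap = max(dim.target - dim.current, 0)
    large_gap = gap >= large_gap_threshold  # assumed cutoff, not from the article
    if large_gap and dim.high_dependency_impact:
        return "Critical priority"
    if large_gap:
        return "Important but can sequence later"
    if dim.high_dependency_impact:
        return "Address opportunistically"
    return "Monitor and maintain"


# Hypothetical inputs, for illustration only.
print(quadrant(Dimension("data", current=2, target=4, high_dependency_impact=True)))
# Critical priority
print(quadrant(Dimension("infrastructure", current=3, target=3.5, high_dependency_impact=False)))
# Monitor and maintain
```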

In practice, Data Readiness almost always lands in the critical priority quadrant because poor data blocks everything else. You can have perfect infrastructure, brilliant people, and a great culture—but without quality, accessible data, nothing works.

Skills is typically the second priority because it has compounding effects. A skilled team can work around infrastructure limitations and drive culture change from within.

Investment Sequencing Example

For a typical organization scoring in the 2.0-2.5 range, I often recommend:

Quarter 1-2: Data foundation

- Data catalog implementation

- Quality monitoring for priority datasets

- Self-service access for approved use cases

Quarter 2-3: Skills building

- Hire/develop core AI team

- Engineering upskilling on ML fundamentals

- Business AI literacy program

Quarter 3-4: Platform and process

- MLOps platform implementation

- AI governance framework

- Streamlined approval workflows

Ongoing: Culture

- Celebrate experiments (including failures)

- Cross-functional AI project teams

- Executive AI education

Building a Roadmap from Assessment Results

The assessment deliverable I provide to clients includes:

- Executive Summary: Overall readiness score and top 3 priorities

- Dimension Deep-Dives: Detailed scoring with evidence and recommendations for each dimension

- Gap Analysis: Critical gaps mapped to specific remediation actions

- Investment Roadmap: Sequenced initiatives with dependencies and milestones

- Use Case Alignment: Which AI use cases are feasible now vs. after readiness improvements
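
One way to approach the use case alignment item is to compare current dimension scores against the minimum scores each use case is judged to need. The use cases, their minimum scores, and the helper name below are all hypothetical; the article does not prescribe specific thresholds.

```python
def blocking_dimensions(current: dict, required: dict) -> dict:
    """Map each dimension that falls short to the size of its shortfall."""
    return {
        dim: minimum - current.get(dim, 0)
        for dim, minimum in required.items()
        if current.get(dim, 0) < minimum
    }


# Hypothetical current scores and use case requirements, for illustration only.
current = {"infrastructure": 3, "data": 2, "skills": 3, "culture": 2, "process": 2}
use_cases = {
    "document search assistant": {"data": 2, "skills": 2},
    "real-time fraud scoring": {"infrastructure": 4, "data": 4, "skills": 3},
}

for name, required in use_cases.items():
    gaps = blocking_dimensions(current, required)
    status = "feasible now" if not gaps else f"after improving {sorted(gaps)}"
    print(f"{name}: {status}")
# document search assistant: feasible now
# real-time fraud scoring: after improving ['data', 'infrastructure']
```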

Sample Roadmap Structure

PHASE 1: Foundation (Months 1-6)

├── Data quality program for priority datasets

├── AI team establishment (hire + upskill)

├── Executive AI literacy workshops

└── Milestone: First low-complexity AI use case in production

PHASE 2: Scale (Months 6-12)

├── MLOps platform implementation

├── AI governance framework rollout

├── Expanded data access and catalog

└── Milestone: 3+ AI use cases in production

PHASE 3: Optimize (Months 12-18)

├── Advanced ML capabilities (real-time, edge)

├── AI embedded in business processes

├── Continuous improvement culture

└── Milestone: AI contributing measurable business value

Final Thoughts

AI readiness isn’t a destination—it’s a continuous journey. The organizations that succeed aren’t necessarily those that start with the highest scores. They’re the ones that honestly assess where they are, prioritize ruthlessly, and execute consistently.

When I finish an assessment, the most valuable outcome isn’t the score itself. It’s the shared understanding across leadership about what it will actually take to become an AI-capable organization. That alignment is the first step toward meaningful progress.

If you’re considering an AI readiness assessment for your organization, start by asking yourself: Are we ready to hear honest answers about where we stand? If yes, you’re already more ready than most.