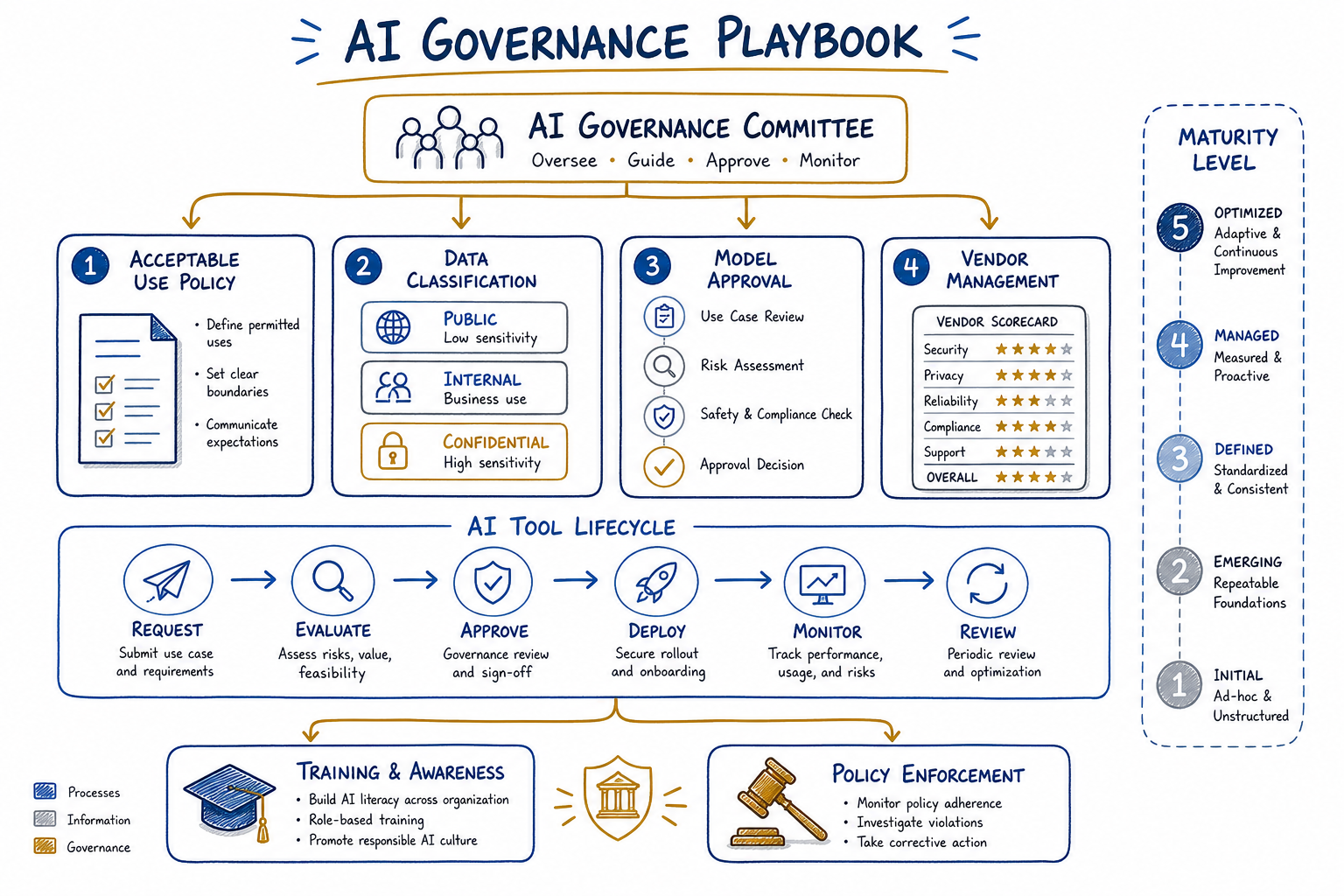

AI Governance Playbook for Organizations

Policies for acceptable use, data handling, model selection, and vendor management in enterprise AI adoption.

A pattern unfolds repeatedly in AI adoption: teams rush to deploy AI tools, productivity soars for a few weeks, and then something goes wrong. A sensitive document gets fed into a public model. An AI-generated contract clause creates liability. A vendor’s model changes behavior overnight. These incidents don’t happen because people are careless—they happen because organizations lack clear governance frameworks.

This playbook distills industry best practices and research into actionable policies and structures. Whether you’re just starting your AI journey or trying to bring order to existing chaos, these frameworks will help you move fast while managing risk appropriately.

Why AI Governance Matters Now

The urgency for AI governance stems from three converging forces.

First, AI adoption has outpaced organizational readiness. When ChatGPT launched in late 2022, it reportedly reached an estimated 100 million users in about two months. Your employees were among them, using AI for work tasks whether or not you had policies in place. Shadow AI is already in your organization.

Second, the risk profile of AI differs from traditional software. AI systems can generate plausible-sounding misinformation, inadvertently expose training data, produce biased outputs, and behave unpredictably when inputs shift. These aren’t theoretical concerns; they’re daily operational realities.

Third, the regulatory landscape is crystallizing rapidly. The EU AI Act, state-level legislation in the US, and industry-specific guidance from regulators mean that “we’ll figure it out later” is no longer viable. Organizations need documented governance to demonstrate compliance.

Good governance isn’t about saying no to AI. It’s about creating clear pathways to say yes—quickly and confidently—to appropriate uses while maintaining guardrails against genuine risks.

Acceptable Use Policies for AI Tools

Every organization needs a clear acceptable use policy (AUP) that employees can actually follow. I’ve seen 40-page policies that no one reads and single-paragraph policies that provide no guidance. The sweet spot is a tiered approach that categorizes uses by risk level.

Policy Template: AI Acceptable Use

Approved Uses (No Additional Approval Required)

- Drafting and editing internal communications

- Generating code for non-production environments

- Research and summarization of publicly available information

- Brainstorming and ideation sessions

- Learning and skill development

Conditional Uses (Requires Manager Approval)

- Customer-facing content generation (must be reviewed before publication)

- Code generation for production systems (must pass standard code review)

- Analysis of aggregated, anonymized business data

- Automation of repetitive internal processes

Restricted Uses (Requires Governance Committee Approval)

- Processing of personally identifiable information (PII)

- Analysis of confidential business data

- Customer-facing AI interactions (chatbots, recommendations)

- Automated decision-making affecting employees or customers

Prohibited Uses

- Input of trade secrets, source code, or proprietary algorithms into external AI systems

- Generation of content intended to deceive

- Circumvention of security controls or access restrictions

- Use of AI outputs without human review for legal, medical, or financial advice

- Processing of data beyond authorized classification levels

The key principle: match oversight intensity to risk level. Low-risk uses should flow freely; high-risk uses need appropriate checkpoints.
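As an illustration, the tiers above could be encoded as a lookup that an intake form or internal chat bot consults before someone starts a task. This is a minimal sketch: the use-case keys are hypothetical, and routing unlisted uses to committee review (failing closed) is an assumption of the sketch, not part of the policy template.

```python
from enum import Enum


class Approval(Enum):
    """Approval tiers from the acceptable use policy template."""
    NONE = "no additional approval"
    MANAGER = "manager approval"
    COMMITTEE = "governance committee approval"
    PROHIBITED = "prohibited"


# Hypothetical use-case keys; each organization would maintain its own catalog.
USE_CASE_TIERS = {
    "internal_drafting": Approval.NONE,
    "nonproduction_code": Approval.NONE,
    "public_research": Approval.NONE,
    "customer_content": Approval.MANAGER,
    "production_code": Approval.MANAGER,
    "pii_processing": Approval.COMMITTEE,
    "customer_chatbot": Approval.COMMITTEE,
    "trade_secrets_to_external_ai": Approval.PROHIBITED,
    "deceptive_content": Approval.PROHIBITED,
}


def required_approval(use_case: str) -> Approval:
    """Look up the approval a use case needs.

    Unlisted uses fail closed to committee review (an assumption of this sketch)
    so that new or ambiguous uses get a human decision instead of passing silently.
    """
    return USE_CASE_TIERS.get(use_case, Approval.COMMITTEE)


print(required_approval("production_code").value)  # manager approval
```

Failing closed keeps low-risk uses frictionless while ensuring that anything the catalog has not anticipated reaches a human reviewer.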

Data Classification and Handling with AI

AI governance requires rethinking data classification. Traditional schemes focus on confidentiality—who can access data. AI introduces new dimensions: can this data be used for training? Can it be processed by external systems? What happens to data after processing?

AI-Aware Data Classification Framework

| Classification | Definition | AI Processing Rules |
|---|---|---|
| Public | Information intended for public distribution | Any AI system, including external services |
| Internal | General business information | Approved AI systems only; no training consent |
| Confidential | Sensitive business information | On-premise or private cloud AI only; strict logging |
| Restricted | Highly sensitive data (PII, trade secrets, regulated data) | Air-gapped AI systems or prohibited entirely |

Data Handling Requirements

Before any data enters an AI system, teams must answer these questions:

- Classification verification: What is the data’s classification level? Has it been properly tagged?

- Processing authorization: Is this AI system approved for this classification level?

- Training consent: Does using this system grant the vendor training rights? Is that acceptable?

- Retention and deletion: How long will data persist in the AI system? Can it be deleted on request?

- Output classification: What classification level should AI outputs inherit?

I recommend establishing a simple decision tree that employees can follow without needing to consult legal for every interaction. The goal is informed, consistent decisions—not bureaucratic bottlenecks.
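One way to make that decision tree concrete is to walk the "AI Processing Rules" column of the classification table in order. The sketch below covers only processing authorization and training consent; it assumes that each stricter level inherits the rules of the level below it (so "approved only" and "no training consent" also apply to Confidential and Restricted data), and the `AISystem` fields and deployment labels are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Classification(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Deployment types treated as "on-premise or private cloud" for Confidential data.
PRIVATE_DEPLOYMENTS = {"private_cloud", "on_premise", "air_gapped"}


@dataclass(frozen=True)
class AISystem:
    name: str
    approved: bool          # on the organization's approved-tools list
    deployment: str         # "external", "private_cloud", "on_premise", or "air_gapped"
    trains_on_inputs: bool  # vendor terms allow training on submitted data
    strict_logging: bool    # every request is logged for audit


def may_process(data: Classification, system: AISystem) -> tuple[bool, str]:
    """Apply the AI processing rules, from the most permissive level to the strictest."""
    if data == Classification.PUBLIC:
        return True, "public data may go to any AI system"
    if not system.approved:
        return False, "only approved AI systems may receive non-public data"
    if system.trains_on_inputs:
        return False, "non-public data may not be used for vendor training"
    if data == Classification.INTERNAL:
        return True, "approved system without training consent"
    if data == Classification.CONFIDENTIAL:
        if system.deployment in PRIVATE_DEPLOYMENTS and system.strict_logging:
            return True, "private deployment with strict logging"
        return False, "confidential data needs on-premise or private cloud AI with strict logging"
    # Restricted: air-gapped only (an organization may instead prohibit it outright).
    if system.deployment == "air_gapped":
        return True, "air-gapped system"
    return False, "restricted data needs an air-gapped system"


assistant = AISystem("enterprise-assistant", approved=True, deployment="external",
                     trains_on_inputs=False, strict_logging=True)
print(may_process(Classification.INTERNAL, assistant))
# (True, 'approved system without training consent')
print(may_process(Classification.CONFIDENTIAL, assistant))
```

Returning a reason alongside the verdict lets the tool explain a refusal in plain language, which keeps employees from needing legal for routine questions.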

Model Selection Criteria and Approval Process

Not all AI models are created equal, and organizations need systematic approaches to evaluating and approving models for different use cases.

Model Evaluation Criteria

Capability Assessment

- Does the model perform the required task with acceptable accuracy?

- How does it handle edge cases and adversarial inputs?

- What are its known limitations and failure modes?

Security and Privacy

- Where is data processed and stored?

- What encryption is used in transit and at rest?

- Does the vendor have SOC 2, ISO 27001, or equivalent certifications?

- What are the data retention and deletion policies?

Compliance and Legal

- Does usage comply with relevant regulations (GDPR, HIPAA, etc.)?

- What are the intellectual property implications of outputs?

- Are there indemnification provisions for IP claims?

Operational Considerations

- What is the vendor’s uptime SLA?

- How are model updates and changes communicated?

- What happens if the vendor discontinues the service?

- Is there a path to bring capabilities in-house if needed?

Approval Process Template

Tier 1: Individual productivity tools (1-week approval)

- Requestor completes self-assessment checklist

- Security team validates vendor security posture

- Automatic approval if checklist passes

Tier 2: Team-level tools (2-week approval)

- Tier 1 requirements plus:

- Data flow diagram showing what data enters the system

- Manager sign-off on use case appropriateness

Tier 3: Enterprise or customer-facing deployments (4-week approval)

- Tier 2 requirements plus:

- Governance committee review

- Legal review of terms and conditions

- Proof of concept with synthetic data

- Incident response plan specific to the deployment
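Because each tier is defined as "the previous tier's requirements plus" a few more, the checklist for any request can be built cumulatively. The sketch below assumes hypothetical requirement identifiers transcribed from the tier descriptions; an empty result corresponds to Tier 1's "automatic approval if checklist passes."

```python
# Hypothetical requirement identifiers, transcribed from the tier descriptions.
TIER_REQUIREMENTS = {
    1: ["self_assessment_checklist", "vendor_security_validated"],
    2: ["data_flow_diagram", "manager_signoff"],
    3: ["committee_review", "legal_terms_review", "synthetic_data_poc",
        "incident_response_plan"],
}
TARGET_WEEKS = {1: 1, 2: 2, 3: 4}


def requirements_for(tier: int) -> list[str]:
    """Tier N inherits every requirement of the tiers below it."""
    if tier not in TIER_REQUIREMENTS:
        raise ValueError(f"unknown tier: {tier}")
    checklist: list[str] = []
    for t in range(1, tier + 1):
        checklist.extend(TIER_REQUIREMENTS[t])
    return checklist


def outstanding(tier: int, completed: set[str]) -> list[str]:
    """Items still blocking approval; an empty list means the request can proceed."""
    return [item for item in requirements_for(tier) if item not in completed]


done = {"self_assessment_checklist", "vendor_security_validated", "manager_signoff"}
print(outstanding(2, done), f"target: {TARGET_WEEKS[2]} weeks")
# ['data_flow_diagram'] target: 2 weeks
```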

Vendor Evaluation Framework for AI Services

AI vendors require scrutiny beyond typical software procurement. Models can change behavior between versions, training data provenance is often opaque, and the competitive landscape shifts rapidly.

Vendor Assessment Categories

Technical Due Diligence

- Model architecture and training data sources (to the extent disclosed)

- Benchmark performance on relevant tasks

- API stability and versioning practices

- Integration complexity and maintenance burden

Security and Compliance

- Third-party audit reports and certifications

- Penetration testing results

- Incident history and response track record

- Subprocessor and data location disclosures

Business Viability

- Financial stability and funding runway

- Customer concentration and market position

- Product roadmap alignment with your needs

- Exit provisions and data portability

Ethical Considerations

- Transparency about model limitations

- Approach to bias testing and mitigation

- Content moderation and safety measures

- Stance on controversial use cases

Vendor Scorecard Template

| Category | Weight | Score (1-5) | Weighted Score |
|---|---|---|---|
| Task performance | 25% | | |
| Security posture | 25% | | |
| Data handling practices | 20% | | |
| Business stability | 15% | | |
| Ethical alignment | 15% | | |
| Total | 100% | | |

Minimum threshold for approval: a weighted score of 3.5 out of 5. Any category scoring below 2.5 requires escalation regardless of the total.
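The scorecard arithmetic is simple, but the order of checks matters: the per-category floor applies regardless of the total, so it runs before the threshold comparison. This sketch keeps the template's weights as integer percentages to avoid floating-point drift; the category keys and decision labels are illustrative.

```python
# Category weights from the scorecard template, as integer percentages.
WEIGHTS = {
    "task_performance": 25,
    "security_posture": 25,
    "data_handling": 20,
    "business_stability": 15,
    "ethical_alignment": 15,
}
APPROVAL_THRESHOLD = 3.5  # minimum weighted score
ESCALATION_FLOOR = 2.5    # any single category below this escalates


def evaluate_vendor(scores: dict[str, float]) -> tuple[float, str]:
    """Return (weighted score, decision) for one vendor's 1-5 category scores."""
    if sum(WEIGHTS.values()) != 100:
        raise ValueError("weights must total 100%")
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the scorecard categories")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("scores must be between 1 and 5")
    weighted = sum(WEIGHTS[c] * s for c, s in scores.items()) / 100
    # The floor check runs first: it applies regardless of the total.
    if any(s < ESCALATION_FLOOR for s in scores.values()):
        return weighted, "escalate"
    return weighted, ("approve" if weighted >= APPROVAL_THRESHOLD else "below threshold")


vendor_a = {"task_performance": 4, "security_posture": 4, "data_handling": 3,
            "business_stability": 4, "ethical_alignment": 3}
vendor_b = {"task_performance": 5, "security_posture": 2, "data_handling": 5,
            "business_stability": 5, "ethical_alignment": 5}
print(evaluate_vendor(vendor_a))  # (3.65, 'approve')
print(evaluate_vendor(vendor_b))  # (4.25, 'escalate')
```

Vendor B illustrates why the floor exists: a strong total (4.25) does not offset a weak security posture.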

Training and Awareness Programs

Policies without training are just documents. Effective AI governance requires building organizational capability—helping people understand not just the rules but the reasoning behind them.

Training Program Structure

Foundational Training (All Employees)

- What AI is and isn’t (dispelling myths)

- Organization’s AI vision and acceptable use policy

- Data classification refresher with AI-specific scenarios

- How to report concerns or request guidance

- Duration: 1 hour, annual refresh

Role-Specific Training

For Managers

- Evaluating AI use case requests

- Monitoring team AI usage for policy compliance

- Handling AI-related performance questions

- Duration: 2 hours, with quarterly updates

For Technical Staff

- Secure AI development practices

- Prompt injection and other AI-specific vulnerabilities

- Code review considerations for AI-generated code

- Model evaluation and testing methodologies

- Duration: 4 hours, with monthly technical briefings

For Procurement and Legal

- AI vendor contract red flags

- Intellectual property considerations

- Regulatory landscape overview

- Duration: 3 hours, with regulatory update sessions

Awareness Campaign Elements

Training alone isn’t sufficient. Ongoing awareness keeps AI governance top of mind:

- Monthly “AI in Practice” newsletter with case studies and policy clarifications

- Slack/Teams channel for AI questions with governance team presence

- Quarterly town halls on AI strategy and governance updates

- Recognition program for employees who identify governance improvements

Governance Committee Structure and Responsibilities

AI governance can’t be owned by a single function. Security cares about data protection. Legal worries about liability. Business units want productivity gains. IT manages infrastructure. A cross-functional committee balances these perspectives.

Committee Composition

| Role | Responsibility | Time Commitment |
|---|---|---|
| Executive Sponsor (C-level) | Strategic direction, resource allocation, escalation resolution | Monthly meeting + ad-hoc |
| Chair (AI/Data leader) | Agenda setting, decision documentation, policy maintenance | 8-10 hours/week |
| Security Representative | Risk assessment, security requirements, incident response | 4-6 hours/week |
| Legal/Compliance Representative | Regulatory compliance, contract review, liability assessment | 4-6 hours/week |
| Business Unit Representatives (2-3) | Use case prioritization, practical feedback, adoption support | 2-4 hours/week |
| IT/Infrastructure Representative | Technical feasibility, integration, operations | 2-4 hours/week |
| HR Representative | Training, workforce implications, employee concerns | 2-4 hours/week |

Committee Responsibilities

Strategic

- Define and update AI governance policies

- Approve enterprise-wide AI tools and platforms

- Set risk tolerance and escalation thresholds

- Report to board/executive team on AI governance posture

Operational

- Review and approve Tier 3 use cases

- Adjudicate policy interpretation questions

- Investigate and respond to AI-related incidents

- Monitor regulatory developments and adjust policies

Enabling

- Remove blockers for approved AI initiatives

- Identify and share best practices across teams

- Sponsor training and awareness programs

- Advocate for appropriate AI investment

Meeting Cadence

- Weekly standup (30 min): New requests, urgent issues, blockers

- Monthly deep dive (2 hours): Policy reviews, strategic initiatives, metrics review

- Quarterly board report (preparation + presentation): Governance posture, incidents, roadmap

Policy Enforcement Mechanisms

Policies without enforcement become suggestions. But heavy-handed enforcement kills innovation. The right approach uses technical controls where possible and graduated human intervention where necessary.

Technical Controls

Preventive Controls

- Network-level blocking of unapproved AI services

- Data loss prevention (DLP) rules flagging sensitive data in AI tool contexts

- API gateways logging and filtering AI service calls

- Approved AI tools provisioned through enterprise SSO
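To show how a DLP rule and a logging gateway fit together, here is a toy pre-send check. It is a sketch, not any DLP product's API: the three regex detectors are illustrative stand-ins for a real rule set, and the gateway blocks on any finding, whereas a gentler rollout might only flag and log.

```python
import logging
import re
from dataclasses import dataclass, field

logger = logging.getLogger("ai_gateway")

# Illustrative detectors only; commercial DLP tools use far richer rule sets.
SENSITIVE_PATTERNS = {
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "classification_marking": re.compile(r"\b(?:CONFIDENTIAL|RESTRICTED)\b"),
}


@dataclass
class GatewayDecision:
    allowed: bool
    findings: list[str] = field(default_factory=list)


def inspect_request(prompt: str, destination_approved: bool) -> GatewayDecision:
    """Scan an outbound AI request; block it if unapproved or if any detector matches."""
    findings = [name for name, rx in SENSITIVE_PATTERNS.items() if rx.search(prompt)]
    if not destination_approved:
        findings.append("unapproved_destination")
    decision = GatewayDecision(allowed=not findings, findings=findings)
    # Log every decision so detective controls can review usage patterns later.
    logger.info("AI request inspected: allowed=%s findings=%s", decision.allowed, findings)
    return decision


print(inspect_request("Summarize our public press release", destination_approved=True))
# GatewayDecision(allowed=True, findings=[])
print(inspect_request("Draft a reply to jane.doe@example.com", destination_approved=True))
# GatewayDecision(allowed=False, findings=['email_address'])
```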

Detective Controls

- Cloud access security broker (CASB) monitoring for shadow AI

- Log aggregation for approved AI tool usage patterns

- Anomaly detection for unusual data volumes to AI services

- Periodic audits of AI-generated content in key workflows
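Anomaly detection on data volumes can start very simply: compare each user's daily upload volume to AI services against that user's own history. The sketch below uses a one-sided z-score; the 3.0 threshold and the megabyte units are assumptions for illustration, and production systems would typically add seasonality and peer-group baselines.

```python
from statistics import mean, stdev


def volume_is_anomalous(history_mb: list[float], today_mb: float,
                        z_threshold: float = 3.0) -> bool:
    """Flag today's upload volume to AI services if it is unusually high for this user.

    Uses a one-sided z-score against the user's own history; the 3.0 threshold
    is an assumed starting point, not a recommendation from this playbook.
    """
    if len(history_mb) < 2:
        return False  # too little history to establish a baseline
    baseline = mean(history_mb)
    spread = stdev(history_mb)  # sample standard deviation
    if spread == 0:
        return today_mb > baseline  # flat baseline: any increase stands out
    return (today_mb - baseline) / spread > z_threshold


daily_mb = [100, 110, 90, 105, 95]
print(volume_is_anomalous(daily_mb, 115))  # False (z is about 1.9)
print(volume_is_anomalous(daily_mb, 130))  # True (z is about 3.8)
```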

Graduated Response Framework

| Violation Severity | Examples | Response |
|---|---|---|
| Minor (unintentional) | Using unapproved tool for low-risk task | Coaching conversation, training reminder |
| Moderate | Repeated minor violations, policy circumvention attempts | Written warning, mandatory retraining |
| Serious | Exposing confidential data to external AI | Formal investigation, potential discipline |
| Severe | Malicious use, gross negligence with restricted data | Immediate access revocation, disciplinary action up to termination |
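Encoding the table helps keep responses consistent across managers. The one rule that needs logic is escalation of repeated minor violations to Moderate. The framework does not say how many repeats count, so `repeat_limit` below is a hypothetical policy parameter.

```python
from enum import IntEnum


class Severity(IntEnum):
    MINOR = 1
    MODERATE = 2
    SERIOUS = 3
    SEVERE = 4


RESPONSES = {
    Severity.MINOR: "Coaching conversation, training reminder",
    Severity.MODERATE: "Written warning, mandatory retraining",
    Severity.SERIOUS: "Formal investigation, potential discipline",
    Severity.SEVERE: "Immediate access revocation, disciplinary action up to termination",
}


def response_for(base: Severity, prior_minor: int = 0, repeat_limit: int = 2) -> str:
    """Map a violation to the table's response, escalating repeated minor violations.

    repeat_limit (how many prior minor violations count as "repeated") is a
    hypothetical policy parameter; the framework does not specify a number.
    """
    severity = base
    if base == Severity.MINOR and prior_minor >= repeat_limit:
        severity = Severity.MODERATE
    return RESPONSES[severity]


print(response_for(Severity.MINOR))                 # Coaching conversation, training reminder
print(response_for(Severity.MINOR, prior_minor=2))  # Written warning, mandatory retraining
```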

Documentation Requirements

Every enforcement action should be documented:

- What policy was violated?

- What was the actual or potential impact?

- How was the violation detected?

- What response was taken?

- What controls could prevent recurrence?

This documentation feeds continuous improvement of both policies and controls.

Handling Policy Violations

When violations occur—and they will—response speed and consistency matter. I recommend a playbook approach that categorizes incidents and prescribes initial response steps.

Incident Classification

Category 1: Data Exposure

An employee or system sent data above the authorized classification level to an AI service.

Initial Response:

- Determine what data was exposed and its classification

- Identify the AI service and its data retention practices

- Request data deletion from vendor if possible

- Assess whether regulatory notification is required

- Document for lessons learned

Category 2: Unauthorized AI Use

An employee used a prohibited AI tool or used an approved tool for a prohibited purpose.

Initial Response:

- Suspend the employee’s access to the AI tool

- Determine the scope and duration of unauthorized use

- Assess what data was processed

- Follow graduated response framework based on severity

Category 3: AI Output Harm

AI-generated content caused harm (misinformation published, biased decision, customer complaint).

Initial Response:

- Contain the harm (retract content, reverse decision, etc.)

- Identify the AI system and prompts involved

- Determine whether the issue is model behavior or user error

- Implement immediate guardrails to prevent recurrence

Post-Incident Review

Every significant incident should trigger a blameless post-mortem:

- What happened and what was the impact?

- What policies or controls were supposed to prevent this?

- Why did those policies or controls fail?

- What changes to policies, controls, or training are needed?

- Who is responsible for implementing changes and by when?

Evolving Governance as AI Capabilities Change

The hardest part of AI governance is that the target keeps moving. Models that were science fiction two years ago are now commodities. Today’s policies will be insufficient for tomorrow’s capabilities.

Governance Evolution Triggers

Review and update governance when:

- Major new AI capabilities emerge (new modalities, significantly improved performance)

- Regulatory requirements change

- A significant incident occurs (internal or publicized elsewhere)

- Annual review cycle (at minimum)

- Business strategy shifts (new markets, products, or risk appetite)

Future-Proofing Strategies

Principle-Based Policies

Write policies around principles (protect confidential data, maintain human oversight for consequential decisions) rather than specific tools. Specific tools go in appendices that can be updated more easily.

Emerging Technology Watch

Assign someone to monitor AI developments and brief the governance committee quarterly on capabilities that might require policy updates.

Scenario Planning

Periodically run tabletop exercises: “What if an AI could perfectly mimic any employee’s writing style?” “What if AI-generated code becomes indistinguishable from human-written code?” These exercises reveal governance gaps before they become incidents.

Stakeholder Feedback Loops

Create easy channels for employees to report governance friction. If people are constantly working around policies, the policies might need adjustment, or they might need better explanation.

Maturity Model for AI Governance

| Level | Characteristics | Focus Areas |
|---|---|---|
| 1 - Ad Hoc | No formal policies, reactive to incidents | Establish basic acceptable use policy, form committee |
| 2 - Developing | Basic policies exist, inconsistent enforcement | Standardize approval processes, implement technical controls |
| 3 - Defined | Comprehensive policies, trained workforce | Optimize processes, measure effectiveness, handle edge cases |
| 4 - Managed | Metrics-driven governance, continuous improvement | Predictive risk management, industry leadership |
| 5 - Optimizing | Governance enables competitive advantage | Shape industry standards, governance as enabler not constraint |

Most organizations I work with are between levels 1 and 2. The goal isn’t to leap to level 5 overnight—it’s to make steady progress appropriate to your AI adoption pace and risk profile.

Getting Started

If you’re reading this and realizing your organization has governance gaps, don’t panic. Start with these steps:

- Assess current state: What AI tools are people actually using? What data is flowing into them?

- Establish basic AUP: Even a simple policy is better than none. Start with the prohibited list.

- Form a working group: You don’t need a formal committee on day one. Get security, legal, and a business leader in a room.

- Pick a pilot: Apply governance rigor to one high-value, moderate-risk use case. Learn from it.

- Iterate: Governance is never done. Build the muscle of continuous improvement.

AI governance isn’t about preventing AI adoption—it’s about enabling it responsibly. Organizations that get this right will move faster than those paralyzed by uncertainty, and more safely than those who ignore the risks.

The playbook I’ve shared here is a starting point. Your organization’s specific context—industry, risk tolerance, AI maturity, regulatory environment—will shape how you adapt these frameworks. The principles remain constant: match oversight to risk, enable the workforce with clear guidance, enforce consistently, and evolve continuously.

The organizations that thrive in the AI era won’t be those that adopted fastest or those that waited longest. They’ll be the ones that built governance muscles allowing them to adopt confidently, adjust quickly, and maintain trust with customers, employees, and regulators along the way.