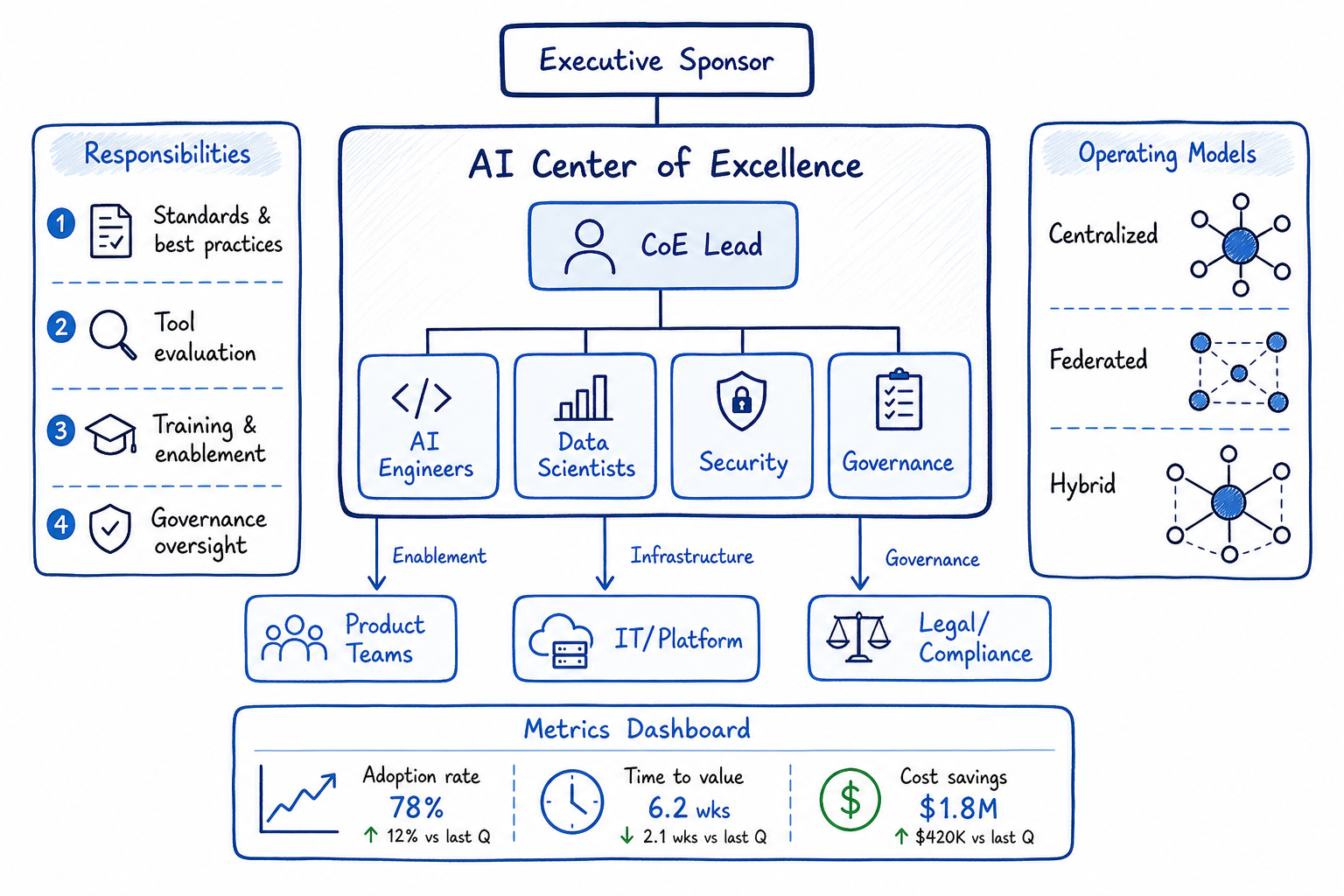

Building an AI Center of Excellence

Team structure, responsibilities, and success metrics for an organizational AI Center of Excellence.

As AI adoption accelerates, many organizations are asking: do we need a dedicated team to coordinate our AI efforts? Having researched this extensively and observed patterns across the industry, I’ve seen AI Centers of Excellence thrive as engines of innovation—and I’ve seen them struggle as bureaucratic bottlenecks. The difference almost always comes down to structure, mandate, and organizational alignment.

In this post, I’ll share a framework for building AI CoEs that actually work, based on industry patterns and my research into what separates successful implementations from failed ones.

What is an AI Center of Excellence (CoE)?

An AI Center of Excellence is a dedicated organizational unit responsible for driving AI adoption, establishing best practices, and ensuring AI initiatives deliver business value. Think of it as the central nervous system for your organization’s AI efforts—coordinating, enabling, and sometimes directly executing AI projects.

A well-functioning AI CoE serves three primary functions:

- Enablement: Making it easier for teams across the organization to build and deploy AI solutions

- Governance: Ensuring AI is used responsibly, ethically, and in compliance with regulations

- Strategy: Aligning AI investments with business priorities and maintaining a coherent technology roadmap

The CoE is not meant to be a gatekeeper that controls all AI activity. The most effective CoEs position themselves as service providers to the rest of the organization—making teams more successful rather than policing their work.

When Does an Organization Need an AI CoE?

Not every organization needs a formal AI CoE. In my experience, you should consider establishing one when:

- Multiple teams are experimenting with AI and you’re seeing duplicated effort, inconsistent approaches, or teams solving the same problem from scratch

- AI projects are moving to production and you need consistent standards for deployment, monitoring, and maintenance

- Regulatory pressure is increasing around AI use in your industry

- Executive leadership is asking for a coherent AI strategy rather than ad-hoc projects

- You’re spending significant budget on AI and need to demonstrate ROI across the portfolio

- Talent is scarce and you need to maximize the impact of your AI specialists

If you have a single AI team working on one or two projects, a full CoE is probably overkill. Start with lightweight coordination and formalize as you scale.

Team Composition: Roles and Skills Needed

The composition of your AI CoE depends on your operating model (more on that below), but here’s a typical structure I recommend for a mid-to-large organization:

Core Team Structure

Head of AI CoE (VP/Director level), with three groups reporting in:

| AI Strategy & Enablement | AI Platform & Engineering | AI Governance & Ethics |
|---|---|---|
| AI Program Managers | ML Engineers | AI Ethics Lead |
| AI Trainers | MLOps Engineers | Compliance Analysts |
| Solution Architects | Data Engineers | Risk Assessors |
| Business Analysts | Platform Engineers | Policy Writers |

Key Roles Explained

Head of AI CoE: This person needs to be senior enough to have influence across the organization. They should combine technical credibility with business acumen and strong stakeholder management skills. I’ve seen CoEs fail when led by someone who is purely technical or purely business-focused.

AI Program Managers: These are the connective tissue of the CoE. They track AI initiatives across the organization, identify opportunities for collaboration, and ensure projects stay aligned with strategy.

ML Engineers: Your technical core. They may work on shared platforms, consult on difficult problems, or embed with product teams for strategic initiatives.

MLOps Engineers: Critical for scaling AI beyond prototypes. They build and maintain the infrastructure for model deployment, monitoring, and lifecycle management.

AI Ethics Lead: Not optional in 2026. This person ensures your AI systems are fair, transparent, and aligned with your values. They also navigate the evolving regulatory landscape.

Solution Architects: Help product teams design AI solutions that fit your architecture standards and leverage existing capabilities.

Sizing Guidelines

As a rough guide, I typically recommend:

| Organization Size | AI Maturity | CoE Size |
|---|---|---|
| < 1,000 employees | Early | 3-5 people |
| 1,000-10,000 employees | Early | 5-10 people |
| 1,000-10,000 employees | Mature | 10-20 people |
| > 10,000 employees | Mature | 20-50+ people |

These numbers assume a hybrid operating model. Centralized models require larger CoEs; federated models can get by with smaller central teams.
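As a rough illustration, the sizing table can be encoded as a small lookup. This is only a sketch: the names `SIZING_TABLE` and `suggested_coe_size` are hypothetical, the "50+" upper bound is represented as a plain 50, and combinations the table does not cover return `None` rather than a guess.

```python
from typing import Optional, Tuple

# Rows mirror the sizing table: (min_employees, max_employees, maturity, (low, high)).
# max_employees=None means no upper bound. The table's "20-50+" is stored as (20, 50);
# treat 50 as a soft ceiling, not a hard limit.
SIZING_TABLE = [
    (0, 999, "early", (3, 5)),
    (1_000, 10_000, "early", (5, 10)),
    (1_000, 10_000, "mature", (10, 20)),
    (10_001, None, "mature", (20, 50)),
]


def suggested_coe_size(employees: int, maturity: str) -> Optional[Tuple[int, int]]:
    """Return the (low, high) headcount range from the table, or None if no row applies."""
    for low_emp, high_emp, row_maturity, size in SIZING_TABLE:
        in_range = employees >= low_emp and (high_emp is None or employees <= high_emp)
        if in_range and maturity.lower() == row_maturity:
            return size
    return None  # the table has no guidance for this combination


if __name__ == "__main__":
    print(suggested_coe_size(5_000, "mature"))
    print(suggested_coe_size(500, "mature"))  # not covered by the table
```

Remember that these ranges assume a hybrid operating model, so adjust up for centralized and down for federated setups.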

Responsibilities: Governance, Enablement, Standards, Training

Governance

AI governance is about ensuring AI is developed and used responsibly. Your CoE should own:

- AI policy framework: What types of AI use cases are permitted? What requires review?

- Risk assessment process: How do you evaluate the risk of an AI system before deployment?

- Model inventory: What AI models are running in production? Who owns them?

- Compliance monitoring: Are your AI systems meeting regulatory requirements?

- Incident response: What happens when an AI system behaves unexpectedly?
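To make the model inventory item concrete, here is a minimal sketch of what an inventory record and two basic queries might look like. The `ModelRecord` fields and the helper names are my own assumptions for illustration; a real inventory would likely live in a database or a model registry and carry far more metadata.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ModelRecord:
    """One row in a hypothetical model inventory."""
    name: str
    owner: Optional[str]      # team or person accountable for the model, if known
    in_production: bool
    last_reviewed: Optional[str] = None  # ISO date of the last compliance review, if any


def production_models(inventory: List[ModelRecord]) -> List[ModelRecord]:
    """Answer: what AI models are running in production?"""
    return [m for m in inventory if m.in_production]


def unowned_production_models(inventory: List[ModelRecord]) -> List[ModelRecord]:
    """Flag production models with no recorded owner, a common governance gap."""
    return [m for m in production_models(inventory) if not m.owner]


if __name__ == "__main__":
    inventory = [
        ModelRecord("churn-predictor", "growth-team", True, "2025-11-01"),
        ModelRecord("invoice-ocr", None, True),
        ModelRecord("search-ranker-v2", "search-team", False),
    ]
    for model in unowned_production_models(inventory):
        print(f"No owner recorded for production model: {model.name}")
```

Even a list this simple answers the two inventory questions directly and gives incident response a place to start.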

I recommend a tiered review process based on risk:

AI Project Tiers

| Tier | Review | Typical use cases |
|---|---|---|
| Tier 1 (Low Risk) | Self-service | Internal tools, non-critical decisions, no PII |
| Tier 2 (Medium Risk) | Light review | Customer-facing, influences decisions, uses sensitive data |
| Tier 3 (High Risk) | Full review | Autonomous decisions, regulated domains, significant impact |

Enablement

This is where CoEs often provide the most tangible value:

- Reusable components: Pre-built models, feature stores, prompt libraries

- Reference architectures: Proven patterns for common use cases

- Consulting services: Expert help for teams tackling difficult problems

- Vendor management: Evaluating and managing relationships with AI vendors

- Tool provisioning: Making AI development tools available across the org

Standards

Document and maintain standards for:

- Model development lifecycle

- Data quality requirements

- Testing and validation procedures

- Deployment and monitoring practices

- Documentation requirements

- Security and privacy controls

Training

Your CoE should offer:

- Executive education: Help leaders understand AI capabilities and limitations

- Technical training: Upskill engineers on ML/AI technologies

- AI literacy programs: Help business users understand how to work with AI

- Hands-on workshops: Practical experience building AI solutions

- Certification programs: Validate skills and ensure quality

Relationship with Product Teams and IT

One of the biggest challenges I see is defining the right relationship between the AI CoE and other teams. Get this wrong, and you’ll either have a CoE that’s ignored or one that becomes a bottleneck.

With Product Teams

| Product Team brings | AI CoE brings |
|---|---|
| Requirements | Technical guidance |
| Domain expertise | Standards compliance |
| User context | Reusable components |
| Prioritization | Specialist support |

Both sets of inputs combine to produce the AI solution.

What works: CoE as consultant and enabler. Product teams own their AI solutions but leverage CoE expertise, platforms, and standards.

What doesn’t work: CoE as mandatory approval gate for all AI work. This creates bottlenecks and resentment.

With IT/Platform Teams

The AI CoE needs a clear interface with central IT:

- Infrastructure: IT typically owns compute, networking, and security. The CoE specifies AI-specific requirements.

- Data platforms: Partner closely with data engineering teams on data access and quality.

- Security: Collaborate on AI-specific security concerns (model theft, adversarial attacks, etc.).

- Operations: Define how AI systems integrate with existing monitoring and incident management.

I recommend regular syncs between CoE leadership and IT leadership to avoid territorial conflicts.

Operating Models: Centralized vs Federated vs Hybrid

There’s no one-size-fits-all answer here. The right model depends on your organization’s size, culture, and AI maturity.

Centralized Model

Structure: a single AI CoE holds all AI talent and serves Business Units A, B, and C, which have no AI staff of their own.

Pros: Maximum efficiency, consistent standards, easier to maintain expertise

Cons: Can become bottleneck, disconnected from business context, slower response

Best for: Smaller organizations, early AI maturity, highly regulated industries

Federated Model

Structure: Business Units A, B, and C each run their own AI team, supported by a light central AI CoE that sets standards only.

Pros: Fast, contextually aware, business units have ownership

Cons: Duplication of effort, inconsistent practices, harder to share learnings

Best for: Large organizations with mature AI capabilities distributed across BUs

Hybrid Model (Recommended)

Structure: the AI CoE owns platform, standards, governance, and the Community of Practice (CoP). Business Units A, B, and C each have their own AI team, and those teams connect to one another through the CoP.

Pros: Balances efficiency with business alignment, scales well

Cons: More complex to operate, requires clear role definitions

Best for: Most mid-to-large organizations

In this model, the CoE focuses on:

- Platform and infrastructure

- Standards and governance

- Training and enablement

- Strategic initiatives

- Community of practice facilitation

Business units own their AI teams and solutions but operate within CoE standards and leverage CoE platforms.

Success Metrics for an AI CoE

You need to measure the CoE’s impact to justify continued investment and identify areas for improvement. Here are the metrics I track:

Adoption Metrics

- Number of AI projects in production

- Number of teams using AI capabilities

- Platform utilization rates

- Training completion rates

Efficiency Metrics

- Time from idea to production for AI projects

- Reuse rate of shared components

- Cost per model deployed

- Support ticket volume and resolution time
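Several of these efficiency metrics fall out of a simple project log. The sketch below assumes a hypothetical `Project` record with idea and production dates, a reuse flag, and a cost figure. The definitions are one reasonable choice, not a standard: in particular, cost per model deployed is taken here as total portfolio spend divided by the number of deployed projects, so abandoned work counts against the figure.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import List, Optional


@dataclass
class Project:
    """Hypothetical log entry for one AI project."""
    name: str
    idea_date: date
    production_date: Optional[date]   # None while the project is not in production
    uses_shared_components: bool
    cost_usd: float


def avg_days_to_production(projects: List[Project]) -> Optional[float]:
    """Mean days from idea to production, over deployed projects only."""
    durations = [(p.production_date - p.idea_date).days
                 for p in projects if p.production_date is not None]
    return mean(durations) if durations else None


def reuse_rate(projects: List[Project]) -> float:
    """Share of projects that use at least one shared component."""
    if not projects:
        return 0.0
    return sum(p.uses_shared_components for p in projects) / len(projects)


def cost_per_model_deployed(projects: List[Project]) -> Optional[float]:
    """Total spend across all projects divided by the number deployed."""
    deployed = [p for p in projects if p.production_date is not None]
    if not deployed:
        return None
    return sum(p.cost_usd for p in projects) / len(deployed)
```

Tracking these per quarter rather than as a single snapshot makes trends visible, which is usually what a sponsor wants to see.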

Quality Metrics

- Model performance in production

- Incident rate for AI systems

- Compliance audit results

- Technical debt levels

Business Impact Metrics

- Revenue influenced by AI

- Cost savings from AI automation

- Customer satisfaction improvements

- Employee productivity gains

Sample CoE Dashboard

AI CoE Dashboard

| Metric | Indicator | Value |
|---|---|---|
| Models in Production | ████████████████░░░░ | 47 (+8 QoQ) |
| Teams Using AI | ██████████████████░░ | 23 (+5 QoQ) |
| Avg Time to Deploy | ████████░░░░░░░░░░░░ | 32 days |
| Platform Uptime | ████████████████████ | 99.7% |
| Training Completions | ██████████████░░░░░░ | 340 this Q |
| Estimated Value Add | ████████████████░░░░ | $12.4M |

Common Anti-Patterns to Avoid

I’ve seen CoEs fail in predictable ways. Here are the anti-patterns to watch for:

1. The Ivory Tower

Symptom: CoE becomes disconnected from real business problems, focuses on interesting technology rather than business value.

Fix: Require CoE members to spend time with product teams. Tie CoE goals to business outcomes.

2. The Bottleneck

Symptom: All AI work must go through the CoE, creating massive queues and frustration.

Fix: Move to a tiered model. Enable self-service for low-risk work. Reserve CoE involvement for high-impact or high-risk initiatives.
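The tiered model from the Governance section can be sketched as a small routing function, so that low-risk work never waits in a queue. The attribute names below and the "any one trigger is enough" rule are my assumptions for illustration; your own policy may weigh these factors differently.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "Tier 1: self-service"
    MEDIUM = "Tier 2: light review"
    HIGH = "Tier 3: full review"


@dataclass
class AIProject:
    """Hypothetical intake form; each flag maps to a criterion in the tier table."""
    customer_facing: bool = False
    influences_decisions: bool = False
    uses_sensitive_data: bool = False   # includes PII
    autonomous_decisions: bool = False
    regulated_domain: bool = False
    significant_impact: bool = False


def review_tier(project: AIProject) -> Tier:
    """Assign the highest tier whose criteria the project triggers (assumed rule)."""
    if (project.autonomous_decisions
            or project.regulated_domain
            or project.significant_impact):
        return Tier.HIGH
    if (project.customer_facing
            or project.influences_decisions
            or project.uses_sensitive_data):
        return Tier.MEDIUM
    return Tier.LOW  # internal, non-critical, no PII: no CoE queue needed


if __name__ == "__main__":
    internal_tool = AIProject()
    support_bot = AIProject(customer_facing=True)
    print(review_tier(internal_tool).value)
    print(review_tier(support_bot).value)
```

The point of encoding the rule is that teams can self-classify in minutes, and only Tier 3 work lands on the CoE's desk by default.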

3. The Science Project Factory

Symptom: Lots of POCs and experiments, very few production deployments.

Fix: Measure deployments, not just experiments. Require business sponsorship for projects. Kill projects that don’t have a path to production.

4. The Standards Police

Symptom: CoE focused on enforcement rather than enablement. Teams try to avoid CoE involvement.

Fix: Reframe CoE as service provider. Measure customer satisfaction. Make compliance easy rather than mandatory.

5. The Talent Hoarder

Symptom: All good AI talent sits in the CoE, leaving product teams without capability.

Fix: Rotate talent between CoE and product teams. Help product teams hire their own AI talent.

6. The Shadow AI Problem

Symptom: Teams building AI solutions outside the CoE because engaging is too hard.

Fix: This is a signal that your CoE is creating friction. Simplify engagement. Offer more value.

Getting Executive Sponsorship

Without strong executive sponsorship, your CoE will struggle to get resources, influence decisions, or drive change. Here’s how I approach this:

Identify the Right Sponsor

Look for an executive who:

- Has budget authority

- Is respected across the organization

- Understands AI’s potential (or is willing to learn)

- Has patience for medium-term investments

Typically this is the CTO, CDO, or a business unit president. Avoid sponsorship at too low a level—you need someone who can break down organizational barriers.

Build the Business Case

Focus on:

- Current state pain: Duplicated effort, inconsistent quality, compliance risk

- Opportunity cost: Projects not happening because capability is lacking

- Competitive pressure: What competitors are doing with AI

- Risk mitigation: Regulatory exposure without proper governance

Quantify where possible. “We’re spending $5M annually on AI across 12 teams with no coordination” is more compelling than “we need better AI practices.”

Start with Quick Wins

Don’t ask for a 50-person team on day one. Start small:

- Document current AI landscape

- Identify 2-3 high-impact opportunities

- Propose a pilot CoE with a small team

- Deliver measurable results

- Use success to justify expansion

Maintain Sponsorship

Keep your sponsor engaged through:

- Regular updates (monthly or quarterly)

- Clear metrics showing progress

- Early warning on challenges

- Visibility into high-profile successes

Evolving the CoE as AI Matures in the Org

Your CoE’s role should change as AI capability matures across the organization.

Stage 1: Establish (Year 1)

Focus: Build foundation

- Create initial standards and governance

- Set up basic platform capabilities

- Deliver first successful projects

- Build core team

CoE Role: Hands-on execution

Stage 2: Scale (Years 2-3)

Focus: Expand adoption

- Mature platform and tools

- Train AI champions across the org

- Move to hybrid operating model

- Establish community of practice

CoE Role: Balance execution with enablement

Stage 3: Optimize (Years 3-4)

Focus: Efficiency and impact

- Optimize platform costs

- Increase reuse and self-service

- Focus on highest-value initiatives

- Strengthen governance as scale increases

CoE Role: Primarily enablement

Stage 4: Embed (Years 4+)

Focus: AI becomes mainstream

- AI skills distributed widely

- Product teams self-sufficient for most use cases

- CoE focuses on cutting-edge capabilities and governance

- Consider whether separate CoE is still needed

CoE Role: Governance, advanced R&D, strategic initiatives

Figure: CoE direct involvement (vertical axis, low to high) plotted against stage (Year 1 Establish, Year 2 Scale, Year 3 Optimize, Year 4+ Embed). Involvement ramps up to an early peak, then steps down to a low, steady level in the later stages.

Budget and Resource Considerations

Let’s talk money. Here’s how I think about budgeting for an AI CoE.

Budget Categories

| Category | % of Budget | Notes |
|---|---|---|
| Personnel | 60-70% | Your biggest investment |
| Platform & Infrastructure | 15-25% | Compute, tools, MLOps |
| Training & Development | 5-10% | Upskilling programs |
| External Support | 5-10% | Consultants, contractors |
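To turn these percentages into dollar figures for a specific budget, a few lines of arithmetic are enough. The sketch below is illustrative only; `BUDGET_SHARES_PCT` and `budget_ranges` are hypothetical names, and the shares are copied straight from the table.

```python
from typing import Dict, Tuple

# Percentage ranges from the budget categories table, stored as whole percents.
BUDGET_SHARES_PCT: Dict[str, Tuple[int, int]] = {
    "Personnel": (60, 70),
    "Platform & Infrastructure": (15, 25),
    "Training & Development": (5, 10),
    "External Support": (5, 10),
}


def budget_ranges(total_usd: float) -> Dict[str, Tuple[float, float]]:
    """Return the (low, high) dollar range for each category of a total budget."""
    return {
        category: (total_usd * low / 100, total_usd * high / 100)
        for category, (low, high) in BUDGET_SHARES_PCT.items()
    }


if __name__ == "__main__":
    for category, (low, high) in budget_ranges(5_000_000).items():
        print(f"{category}: ${low:,.0f} to ${high:,.0f}")
```

For a $5M budget, for example, personnel works out to between $3.0M and $3.5M. Note that the low ends of the ranges sum to 85% and the high ends to 115%, so an actual plan has to pick points within the ranges that add up to 100%.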

Typical Budget Ranges

For a mature hybrid CoE:

| Organization Size | Annual CoE Budget |
|---|---|
| Mid-size (1K-5K employees) | $2-5M |
| Large (5K-20K employees) | $5-15M |
| Enterprise (20K+ employees) | $15-50M+ |

These numbers include personnel costs but not project-specific costs (which should be funded by business units).

Funding Models

Central funding: CoE budget comes from corporate. Pros: stability, independence. Cons: may be seen as overhead.

Chargeback: Business units pay for CoE services. Pros: CoE must prove value. Cons: can discourage adoption.

Hybrid: Core capabilities centrally funded, project work charged back. This is my preferred model.

Making the Case for Budget

When requesting budget, frame it in terms of:

- Avoided cost: “Without a CoE, each team will build their own ML platform at $X”

- Accelerated value: “CoE can cut time-to-production by 50%, getting value sooner”

- Risk reduction: “Proper governance reduces regulatory risk exposure by $X”

- Talent efficiency: “Shared expertise means we need 30% fewer AI specialists”

Conclusion

Building an effective AI Center of Excellence is challenging but achievable. The organizations that get it right gain a significant competitive advantage—they can move faster, with better quality, and at lower risk than those with fragmented AI efforts.

Key takeaways:

- Start with clear purpose: Know whether you’re optimizing for enablement, governance, or both

- Right-size for your stage: Don’t over-build early, but don’t under-invest as you scale

- Choose the right operating model: Hybrid works for most, but tailor to your culture

- Focus on value, not control: The best CoEs make teams successful, not dependent

- Measure what matters: Track adoption, efficiency, quality, and business impact

- Evolve over time: Your CoE’s role should change as AI matures in your org

- Secure executive sponsorship: Without it, you’ll struggle to drive change

If you’re building an AI CoE, I’d love to hear about your experience. What’s working? What challenges are you facing? The field is evolving rapidly, and we all benefit from sharing what we learn.